And I wonder whether it is a double-precision problem in the link between GMSH and Freefem or a problem in the numerical computations. For a planar target, where the sputter direction is e.g.

#Gmsh parallel free#

To keep the sizes in Freefem consistent with the physical constants, which are in SI units, it works… But when I use a model with 35 000 nodes (4 DOF/node), the results are ZERO everywhere. FEniCS is a very capable free and open source Finite Element solver but its geometry and meshing capabilities leave.

The smallest element size is about 10 nm and the biggest about 50 µm. In GMSH I have created a mesh with 20 000 nodes (4 DOF/node) with dimensions in (else GMSH fails, there is a thin layer of 50 nm). In fact, I wanted to understand this problem because I have a model that includes mesh elements with a wide size range for microtech problems. Thus, I keep the *.msh format with the 2.2 format, as I usually do.

Most of the meshing steps can be performed in parallel.

Thank you for your prompt and thorough response.

The HXT algorithm is a new efficient and parallel reimplementation of the Delaunay algorithm.

So, I would be happy to understand and follow the best way to use GMSH and Freefem for 3D meshes.

Select older mesh version, for compatibility of FF++. As I read that the mesh read from gmsh is not correct for freefem and we should transform by the command tetg etc… For the two last commands I have an error.

(3) Other information I saw on a forum claims that we should take care of the following format:

(2) As I read in an article from 2018, Freefem can’t read *.msh and only *.mesh? But with correct use of Physical Volume or Surface it seems to me that *.msh works.

(1) I would like to understand what is the difference between:

The effectiveness of PUMI is demonstrated by its applications to massively parallel adaptive simulation workflows.

I have some doubts on the correct use of GMSH and Freefem for 3D meshes.
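Since the thread above turns on whether a *.msh file is written in the 2.2 format, a quick way to check is to read the `$MeshFormat` header, which in an ASCII MSH file holds the version number, the file type (0 for ASCII, 1 for binary) and the data size. The following is a minimal sketch only: the function name `read_msh_version` and the sample string are made up for illustration, and it works on the text of a file rather than calling any Gmsh or FreeFem API.

```python
def read_msh_version(text):
    """Return (version, file_type, data_size) from the $MeshFormat block.

    `text` is the content of an ASCII .msh file. The function name is a
    hypothetical helper for this sketch, not part of Gmsh or FreeFem.
    """
    lines = [ln.strip() for ln in text.splitlines()]
    if "$MeshFormat" not in lines:
        raise ValueError("no $MeshFormat section found")
    start = lines.index("$MeshFormat")
    if start + 1 >= len(lines):
        raise ValueError("$MeshFormat section is empty")
    fields = lines[start + 1].split()
    if len(fields) < 3:
        raise ValueError("malformed $MeshFormat line")
    version, file_type, data_size = fields[:3]
    return version, int(file_type), int(data_size)


# Illustrative header of a version 2.2 ASCII file (not taken from a real mesh).
sample = "$MeshFormat\n2.2 0 8\n$EndMeshFormat\n$Nodes\n0\n$EndNodes\n"

version, file_type, data_size = read_msh_version(sample)
is_legacy = version.startswith("2.")  # True for the 2.x family of MSH files
```

For a real file, the same function could be applied to `open(path).read()`, assuming the file was saved in ASCII mode.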

#Gmsh parallel software#

Here we present the overall design, software structures, example programs, and performance results. To support the needs of parallel unstructured mesh simulations, PUMI also supports a specific set of services such as the migration of mesh entities between parts while maintaining the mesh adjacencies, maintaining read-only mesh entity copies from neighboring parts (ghosting), repartitioning parts as the mesh evolves, and dynamic mesh load balancing.
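The ghosting service described above can be illustrated on a toy mesh. This is only a sketch of the idea, assuming a mesh given as a plain adjacency dictionary and an element-to-part ownership map. The names `ghost_layer`, `owner` and `adjacency` are hypothetical, and none of this reflects PUMI's actual API or data structures.

```python
from collections import defaultdict


def ghost_layer(owner, adjacency):
    """Return, per part, the elements owned by other parts that touch it.

    owner:     dict mapping element id -> owning part id
    adjacency: dict mapping element id -> list of neighbouring element ids
    Each part would keep read-only copies (ghosts) of the returned elements.
    """
    ghosts = defaultdict(set)
    for elem, neighbours in adjacency.items():
        for nb in neighbours:
            if owner[nb] != owner[elem]:
                # nb lives on another part but is adjacent to elem
                ghosts[owner[elem]].add(nb)
    return dict(ghosts)


# Toy 1D "mesh": a chain of six elements 0-1-2-3-4-5 split into two parts.
adjacency = {i: [j for j in (i - 1, i + 1) if 0 <= j < 6] for i in range(6)}
owner = {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}

ghosts = ghost_layer(owner, adjacency)
# Part 0 needs a copy of element 3, and part 1 needs a copy of element 2.
```

A real infrastructure also has to keep these copies consistent when entities migrate or the mesh is repartitioned, which this sketch does not attempt.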

PUMI’s mesh maintains links to the high-level model definition in terms of a model topology as produced by CAD systems, and is specifically designed to efficiently support evolving meshes as required for mesh generation and adaptation. In July 2017, the new collated file format was introduced to. On the other hand, ParaView is an efficient open source parallel visualization tool which has been used successfully for post hoc visualization of large scale, unstructured data sets up to several billion DOF.

#Gmsh parallel serial#

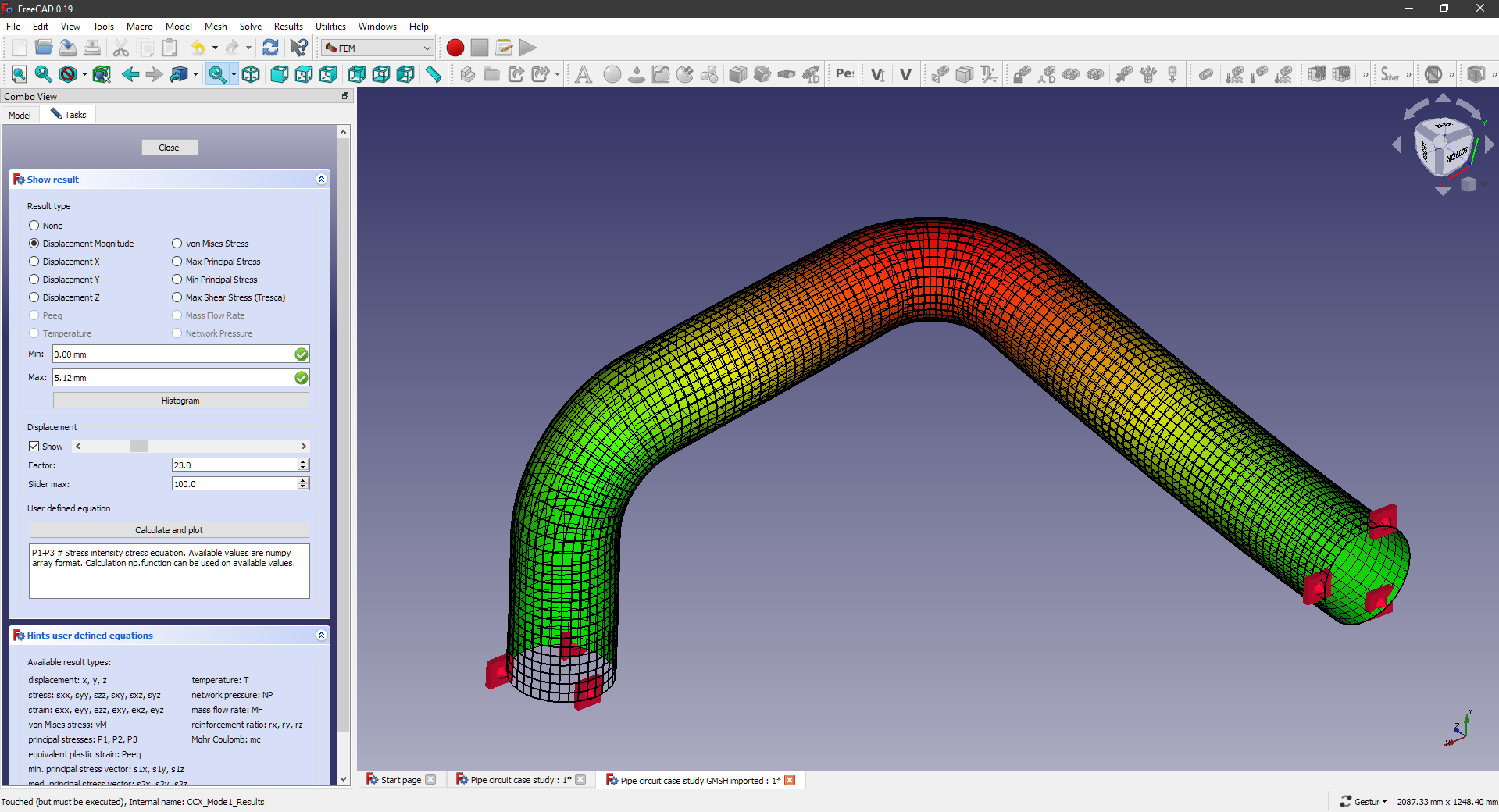

On parallel solution of linear elasticity problems.

However, Gmsh is in essence a serial tool and is therefore limited to a relatively low number of DOF and level of h-refinements.

Processor directories are named processorN, where N is the processor number.

Application of free software FreeFem++/Gmsh and FreeCAD/CalculiX for simulation of static elasticity.

In PUMI, the mesh representation is complete in the sense of being able to provide any adjacency of mesh entities of multiple topologies in O(1) time, and fully distributed to support relationships of mesh entities across multiple memory spaces in a manner consistent with supporting massively parallel simulation workflows.

When an OpenFOAM simulation runs in parallel, the data for decomposed fields and mesh(es) has historically been stored in multiple files within separate directories for each processor.

The Parallel Unstructured Mesh Infrastructure (PUMI) is designed to support the representation of, and operations on, unstructured meshes as needed for the execution of mesh-based simulations on massively parallel computers.

PUMI: Parallel unstructured mesh infrastructure.
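The processorN naming convention mentioned above makes it easy to find the per-processor directories of a decomposed case. The sketch below assumes only that convention. The helper name `processor_dirs` and the temporary case layout are invented for illustration, and the code does not use any OpenFOAM tooling.

```python
import re
import tempfile
from pathlib import Path

# Matches directory names of the form processorN, with N a non-negative integer.
_PROCESSOR_RE = re.compile(r"^processor(\d+)$")


def processor_dirs(case_dir):
    """Return the processorN directories under case_dir, sorted by N."""
    found = []
    for entry in Path(case_dir).iterdir():
        match = _PROCESSOR_RE.match(entry.name)
        if match and entry.is_dir():
            found.append((int(match.group(1)), entry))
    # Sort numerically so that processor10 comes after processor2.
    return [path for _, path in sorted(found)]


# Build a throwaway case layout to exercise the helper.
with tempfile.TemporaryDirectory() as case:
    for name in ("processor0", "processor10", "processor2", "constant", "system"):
        (Path(case) / name).mkdir()
    names = [p.name for p in processor_dirs(case)]
```

Sorting on the integer suffix rather than on the name avoids the lexicographic ordering in which `processor10` would precede `processor2`.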